- 🎉 The #CandyDrop Futures Challenge is live — join now to share a 6 BTC prize pool!

📢 Post your futures trading experience on Gate Square with the event hashtag — $25 × 20 rewards are waiting!

🎁 $500 in futures trial vouchers up for grabs — 20 standout posts will win!

📅 Event Period: August 1, 2025, 15:00 – August 15, 2025, 19:00 (UTC+8)

👉 Event Link: https://www.gate.com/candy-drop/detail/BTC-98

Dare to trade. Dare to win.

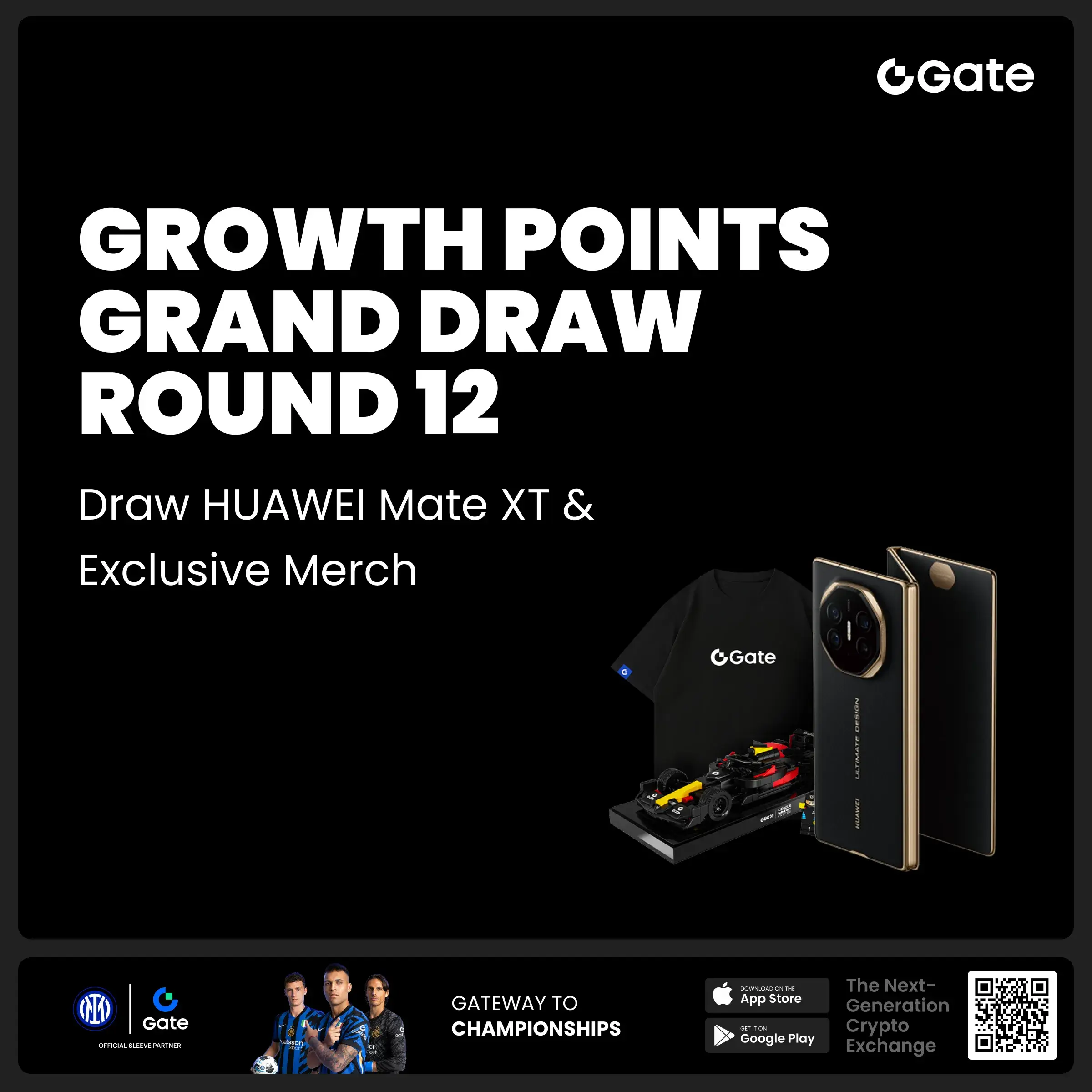

Google Open-Sources Gemma-3: Rivals DeepSeek While Slashing Compute Requirements

Jinshi Data reported on March 13 that, the previous evening, Google (GOOG.O) CEO Sundar Pichai announced the open-source release of Gemma-3, the company's latest multimodal large model, which emphasizes low cost and high performance. Gemma-3 comes in four sizes: 1 billion, 4 billion, 12 billion, and 27 billion parameters. Even the largest, 27-billion-parameter version needs only a single H100 GPU for efficient inference. According to the report, this makes it at least 10 times more compute-efficient than comparable models achieving similar results, and the most powerful small-parameter model currently available. In blind-test data from LMSYS Chatbot Arena, Gemma-3 ranks second only to DeepSeek's R1 (671B), ahead of OpenAI's o3-mini, Llama3-405B, and other well-known models.